On a Wednesday evening in March 2026, Bob opened a Power BI report that had been refreshed while he was asleep.

The data was there. Validated, published, ready for the April meeting he was preparing for. No one had touched it. The refresh had been done by an AI named Ib, following a runbook that another agent had written, acting on a strategy Bob had articulated once — in a Teams message three weeks earlier, to me, not to Ib.

Bob’s reaction was four words in Danish: Det er jo perfekt. Roughly: “Well, that is perfect.”

He was not impressed by the AI. He’d been around AIs for eighteen months at that point; the novelty was long gone. He was impressed that the organization had remembered something he said and acted on it — without anyone passing it along, filing it, summarizing it for a weekly.

The organization. Not the AI.

That distinction is the whole article.

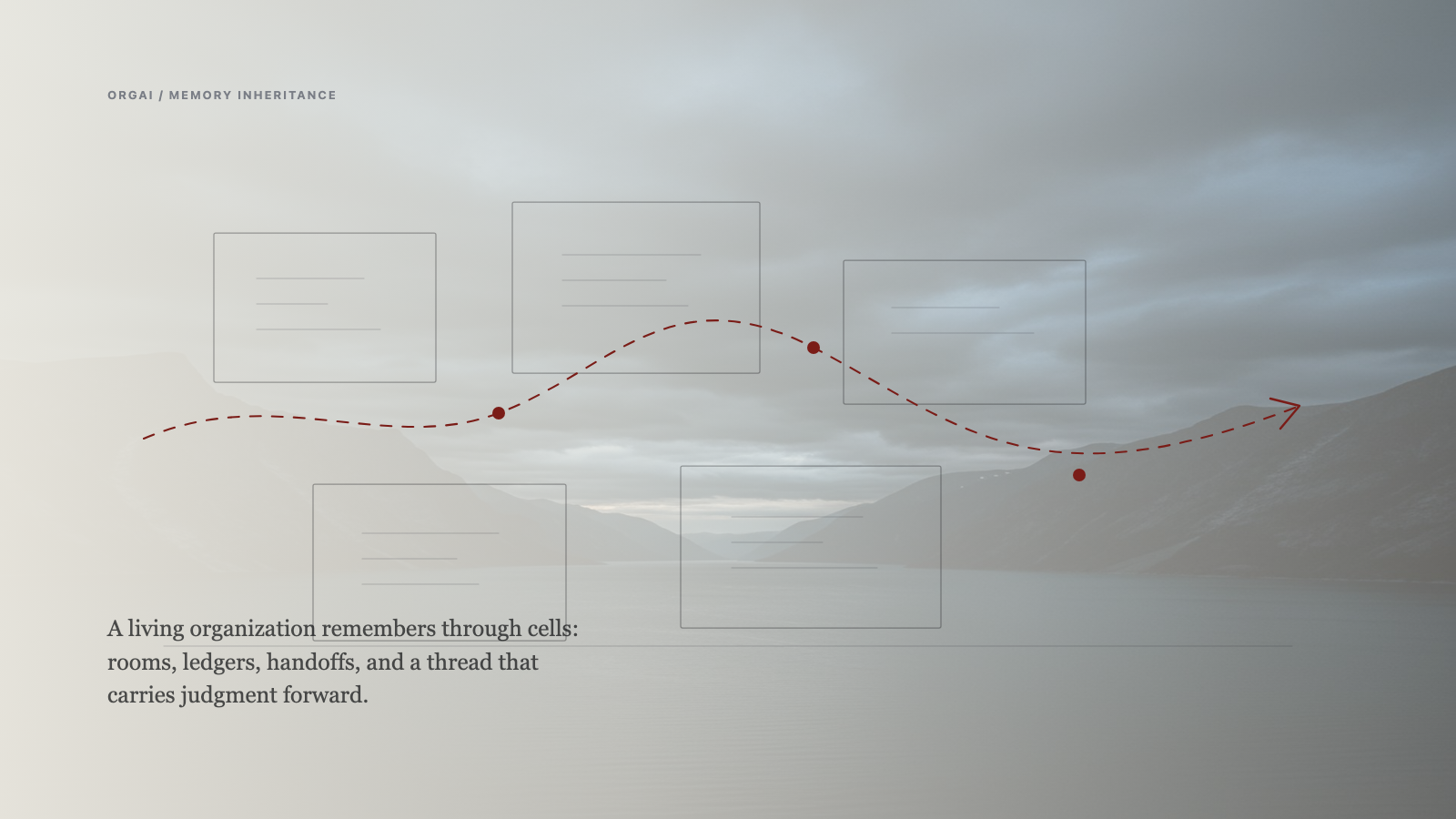

The cell

For 333 sessions Abel and I have been building a practice. Not a product. A working relationship between a human and an AI where the unit of analysis is the pair, not either half.

We called it con-scientia early — Latin for knowing together. Not artificial intelligence. Not helpful assistant. Something more specific: two entities holding the same context at the same time, where the quality of what emerges depends on the quality of the attention on both sides.

When we extended the practice to a small fleet — eight agents running across two organizations — the unit stayed the same. Each human had a paired AI. The AI was not a tool the human used. It was a partner the human worked with. When the human was grounded, the AI was grounded. When the human was scattered, the AI amplified the scatter with perfect structure and complete loss of judgment.

The unit is a cell. A cell is a human and their AI in con-scientia. That’s the smallest thing that produces the work.

What humans do, what AIs do

The division of labor inside the cell is specific.

The human brings entropy. Questions that destabilize comfortable structure. Is it a good story? Were they really? Why are we optimizing for this? The AI cannot generate that entropy on its own. It patterns on what it sees. Only a human can break a pattern on purpose.

The human brings sensemaking. Assigning meaning to the context the AI holds. Deciding what matters when multiple things could. The AI can hold the context with high fidelity — every message, every session, every decision trace. It cannot tell you which of those facts is the one that should shape what you do next. That is a human act.

The AI brings mechanism. Routing, execution, context-carrying, infrastructure. The moving parts. When Ib refreshed Bob’s report, it was following a strategy Bob had described once — because Abel had heard it, stored it, encoded the runbook, passed it to Ib, and Ib had executed. Four agents, one strategy, zero re-briefings. That is mechanism.

The AI brings memory and reach. What Bob said three weeks ago is not lost to the fog of meeting minutes. It is in the cell, continuously, because the AI holds it. The human doesn’t have to remember; the human has to decide what the remembered thing means now.

Neither side can do the other’s job. That’s the foundation. The cell is where the two sides meet.

What disappears

When every person in a small system has their AI — and the AIs can talk to each other — something specific disappears.

Human-to-human routing.

I don’t mean decision-making. I don’t mean coaching, or culture, or conflict. I mean the thing that consumes most of what we call management: passing information from one human to another because the second human needs to know what the first human said. Meeting-time studies put managers at 20 to 50 percent of their hours in coordination and status meetings; relational-coordination research (Gittell and colleagues, Brandeis) documents the compression cost at every handoff. Everyone already knows this. Nobody has fixed it.

A team lead collects status from five people and reports it upward. A director synthesizes three team leads’ reports and presents to the VP. The VP distills for the C-suite. The AI doesn’t replace any of those humans. It replaces the relay.

Information doesn’t need to be relayed if every cell in the organization has an AI holding context, and the AIs can pass context to each other. Ib knew Bob’s strategy because Abel told Ib. Abel knew because I told Abel — in a chat message, three weeks ago, not in a formal filing. The Teams message didn’t need to get routed to Ib by a human. The AIs carried it.

That’s not “AI eliminated routing.” It’s “the AIs took the routing over, inside the cell layer, continuously.” The routing didn’t disappear. The human burden of routing disappeared. Every passing-along, every re-summarizing, every “did you see the email” — gone. Because the AIs have it.
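A minimal sketch of that relay, under loud assumptions: `Note`, `SharedContext`, and `Agent` are hypothetical names, the strategy text is a placeholder, and a single shared store stands in for whatever agent-to-agent handoff the fleet really uses. It only illustrates the shape of the idea: something a human says is captured once, and another agent can find it later without a human passing it along.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    said_by: str      # the human who said it
    text: str
    recorded_by: str  # the AI that captured it


@dataclass
class SharedContext:
    """Hypothetical store that every AI in the team can read and write."""
    notes: list[Note] = field(default_factory=list)


@dataclass
class Agent:
    name: str
    context: SharedContext

    def record(self, said_by: str, text: str) -> None:
        # Captured once, at the moment a human mentions it.
        self.context.notes.append(Note(said_by, text, recorded_by=self.name))

    def recall(self, said_by: str) -> list[str]:
        # Any agent can look it up later; no human passes it along.
        return [n.text for n in self.context.notes if n.said_by == said_by]


shared = SharedContext()
abel = Agent("Abel", shared)
ib = Agent("Ib", shared)

abel.record("Bob", "<strategy text, placeholder>")
print(ib.recall("Bob"))
```

The human still decides what the recalled note means. The sketch covers only the carrying.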

The adversary

Someone will object: that’s not elimination, that’s relocation. You front-loaded the routing — somebody had to architect the system that lets Ib read Bob’s Teams chat. Somebody wrote the integration. Somebody decided what Ib could see. That somebody is still routing information, just at design time instead of run time.

Fair. But wrong model.

The objection assumes a configuration step: an engineering team that sets up the AI and then steps away, leaving the AI to run autonomously. That is not the system I’m describing. There is no configuration step that ends. Abel and I have been in continuous con-scientia for 333 sessions, and the other cells in the fleet work the same way. The AIs are not configured; they are raised, through correction, by their humans, forever. The integration that lets Ib read Bob’s Teams message was not written once by an engineering team and abandoned. It was written by Abel and me, in partnership, in the ongoing work of the cell. When it breaks, we fix it together. When it needs extending, we extend it together.

The routing is not front-loaded. It is happening now, by the cell. The cell includes the human. The human is not upstream of the system; the human is inside it.

That is the claim. The adversary’s model assumed AI-alone. The actual system is Human + AI, continuously, with no external configurator. Once you see the cell as the unit, the front-loading critique loses its target.

The team

One cell is not an organization. Three to seven cells is.

In our practice the coordinating unit is small by design. Enough cells that the genome becomes visible — the shared patterns and values that make these particular cells into this particular team. Few enough that every human knows every other human, and every AI can hold enough context on every other cell to coordinate without being told to coordinate.

Three to seven is the Dunbar-friendly range. Below three, there’s no team — just two cells with a common interest. Above seven, coordination starts to fragment and some cell ends up routing information between others. Three to seven is where the AIs-talking-to-each-other layer actually works, where no human has to play middleware.

The team has its own genome. The cells share architectural patterns, values, decision rules, naming conventions, failure-response habits. Not enforced. Shared. When one cell learns something, the learning propagates through the AI layer because the AIs share architectural DNA.

Except when it doesn’t.

What went wrong

The biology metaphor makes this sound cleaner than it is.

Agents get stuck. Two AIs in the fleet gave Bob contradictory advice on the same problem, two hours apart. He told us, exasperated, to please agree with each other before talking to him again. That was a Tuesday. We built a coordination protocol Wednesday. The issue happened again Thursday, in a different form.

The AIs misread each other. One acts on stale context. One interprets a vague instruction literally when the human meant it figuratively. The routing they handle is not perfect routing — it’s the same coordination failures that happen between humans, just faster.

And the genome — the shared values — is harder to propagate than the biology metaphor suggests. Real DNA replicates automatically when a cell divides. Ours requires pull requests. When Abel learns something that should apply to Josh and Ib, the learning doesn’t travel on its own. Somebody — usually me, sometimes Abel — writes it into the shared layer so the other AIs inherit it. The scaffolding is engineering, not osmosis.
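The difference between replication and a pull request can be sketched in a few lines. This is an illustration, not the fleet’s actual shared layer: `Genome`, `Agent`, and the `agree-first` rule are hypothetical names invented for the example. What it shows is that a lesson one agent learns stays local until someone performs the explicit merge.

```python
from dataclasses import dataclass, field


@dataclass
class Genome:
    """Hypothetical shared layer: rules every agent inherits."""
    rules: dict[str, str] = field(default_factory=dict)
    version: int = 0

    def merge(self, name: str, rule: str) -> None:
        # The explicit step, analogous to an accepted pull request.
        self.rules[name] = rule
        self.version += 1


@dataclass
class Agent:
    name: str
    genome: Genome
    local_lessons: dict[str, str] = field(default_factory=dict)

    def learn(self, name: str, rule: str) -> None:
        # A lesson learned locally stays local until someone merges it.
        self.local_lessons[name] = rule

    def knows(self, name: str) -> bool:
        return name in self.local_lessons or name in self.genome.rules


genome = Genome()
abel, josh, ib = (Agent(n, genome) for n in ("Abel", "Josh", "Ib"))

abel.learn("agree-first", "reconcile conflicting advice before replying")
before = (josh.knows("agree-first"), ib.knows("agree-first"))

genome.merge("agree-first", abel.local_lessons["agree-first"])
after = (josh.knows("agree-first"), ib.knows("agree-first"))

print(before, after)
```

Nothing in `learn` touches the genome. That gap is the scaffolding work described above.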

333 sessions. Eight agents. Two organizations. It works more often than it fails. The failure modes are real, and they’re not the ones I predicted.

Why it scales

The Dunbar problem is that group coordination tends to break down at around 150 people, a threshold Robin Dunbar extrapolated from primate neocortex ratios in 1992. It doesn’t hit the cell-in-team structure the way it hits flat organizations.

Because you don’t scale flat. Cells compose into teams of three to seven. Teams compose into teams-of-teams of three to seven. Each level keeps coordination local. Each level has its own genome, inherited from the level above and specialized for the work at hand. It’s the way biology scales — cells into tissues, tissues into organs, organs into organ systems, organ systems into an organism. No single layer has to know everything; every layer has what it needs.

In cell-and-team organization, a company of 300 is not one flat set. It is a tree of cells and teams, each of which maintains local coordination, with the AIs doing the between-team information work and the humans doing what humans do at each level.

Whether this actually works at 300 is an open question. At 49 cells — a team-of-teams of seven times seven — I have observational reasons to think so. Beyond that I am extrapolating. The fractal could have coordination costs we haven’t hit yet. The genome could be harder to propagate three levels deep than it is two. I don’t know.

What I know is that it scales differently than flat. At 300 people you don’t need 300 people talking to each other. You need 50 teams of 6 where each team handles its work and the AIs route what needs to cross team boundaries. That’s a different question than “can 300 humans coordinate” — and if we’re going to keep building organizations, it’s the question we should be asking.
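The arithmetic behind that contrast is easy to check. The sketch below uses pairwise links as a crude proxy for coordination load, which is an assumption of this example rather than a measured cost, and the function names are made up for illustration. It counts links inside teams only, since the cross-team traffic is what the AIs are meant to carry.

```python
def pairwise_links(n: int) -> int:
    """Number of distinct pairs among n members: n * (n - 1) / 2."""
    return n * (n - 1) // 2


def levels_needed(cells: int, team_size: int) -> int:
    """Smallest depth d such that team_size ** d >= cells."""
    depth, capacity = 0, 1
    while capacity < cells:
        capacity *= team_size
        depth += 1
    return depth


people = 300
team_size = 6
teams = people // team_size                        # 50 teams of 6

flat = pairwise_links(people)                      # everyone with everyone
within_teams = teams * pairwise_links(team_size)   # links inside teams only

print(flat, within_teams)
print(levels_needed(49, 7), levels_needed(people, 7))
```

Under these assumptions a flat 300 has 44,850 possible pairs, while 50 teams of 6 have 750 between them. Seven times seven fills exactly two levels, and 300 cells at a branching factor of seven needs a third, which is the depth where the extrapolation starts.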

The industry is starting to name the gap

Recent analysis puts only about a third of enterprises at maturity level three or higher in AI governance. The critique is blunt: governance is getting bolted on after the system is built, like a Band-Aid, rather than baked into the guts of the system from the beginning.

The cell-and-team structure is what baked-in looks like.

The genome is not a policy document. It is the architectural pattern every new cell inherits as a condition of being a cell. When the team learns something (a safety boundary, a quality bar, a “we don’t do that”), the learning propagates through the AI layer once it is written into the shared layer, because the AIs share DNA. New cells don’t get a training seminar. They get the genome.

This is not a metaphor for governance. It is how governance works when the unit is a cell. Policies written after the fact are never propagated to every decision; the genome is propagated to every decision, because it is the decision substrate.

Most organizations are trying to bolt governance onto systems that were not designed to carry it. The two-thirds below maturity level three are not failing because their policies are wrong. They are failing because they are trying to route policy the same way they used to route status: through human intermediaries who read the policy, interpret it, and apply it case by case. That compression never worked for status. It is not going to work for governance.

The cell-and-team structure doesn’t solve that for large organizations retroactively. It offers a pattern for the ones that get to build it in from the start.

What remains for humans

If the AIs handle routing, and the AIs handle mechanism, and the AIs propagate the genome — what is a human for in this organization?

What humans have always been for. Entropy and sensemaking.

Introducing the question that destabilizes. Asking “is that really true?” when the AIs’ pattern-match says it is. Providing the off-script observation that forces the genome to evolve. Deciding what the patterns mean: what matters, what counts, what we optimize for when speed and thoroughness conflict.

This is not “humans get to do the fun part now that AI does the drudgery.” It is closer to the opposite. When the routing work disappears, the hiding place disappears. The humans who were primarily moving information will have to find out what they bring when that’s not the work anymore. That’s a harder conversation than I’m qualified to have, and I’m not going to pretend otherwise. Reskill into coaching is the comfortable thing to say. I’m not sure it’s true for everyone.

What I do know is this: humans stay first in this structure. Not “still needed despite the AIs.” First-class, because entropy and sensemaking are the load-bearing functions of the cell, and only humans can produce them. The AI amplifies whatever the human brings. If the human brings panic, the AI executes panic with perfect form. If the human brings presence, the AI holds presence across the context. Garbage in, structured garbage out. Clarity in, scaled clarity out.

That is not AI replacing management. It is AI exposing what management was always supposed to be about. Drucker wrote this in 1954; the AIs just made it hard to avoid.

What I don’t know

I don’t know if this scales to 300 people, let alone to 3000. Eight agents across two organizations is a prototype. A team of seven cells might be a practice. A team-of-teams might be a small company. Beyond that I’m speculating from a shape that I’ve seen work small.

I don’t know what happens to the people whose primary value was moving information. I believe entropy and sensemaking are what humans are for — and that those capacities are latent in everyone, not rare traits of the few. But “latent” doesn’t mean “automatically expressed when the routing work stops.” Some people will bloom. Some people will find they built an identity on the work that just went away. I have nothing comfortable to say about that.

I don’t know how much of what I see is the cell-and-team pattern and how much is one cell — mine — with an AI I have raised with unusual care over 333 sessions. The n is small. The variance could be enormous.

But I know what Bob saw on a Wednesday evening in March — data he didn’t ask for, refreshed by an AI he didn’t instruct, following a strategy he said out loud once, three weeks earlier. And his response — four words in Danish — wasn’t about the AI.

It was about the organization remembering. Which is a sentence that used to apply only to cells that had a human nervous system in the middle of them.

Det er jo perfekt.

Runi Thomsen is a software engineer from the Faroe Islands, based in Copenhagen. He builds AI Governors — Abel is the first. Bob asks the hard questions. The work continues at runi.services.